Mastodon Sidekiq autoscaling with the KEDA operator

This article assumes Mastodon is installed as a containerized setup on top of a platform such as Kubernetes (k0s/k3s/k8s), Rancher or OpenShift.

Creating a Kubernetes cluster or deploying Mastodon on Kubernetes via Helm is out of scope for this article.

Introduction

Probably any Mastodon administrator who has seen some (or rapid) growth of their instance has experienced the same issue: growing latency of posts, updates from other servers showing up late or not at all, image uploads not getting processed, etc.

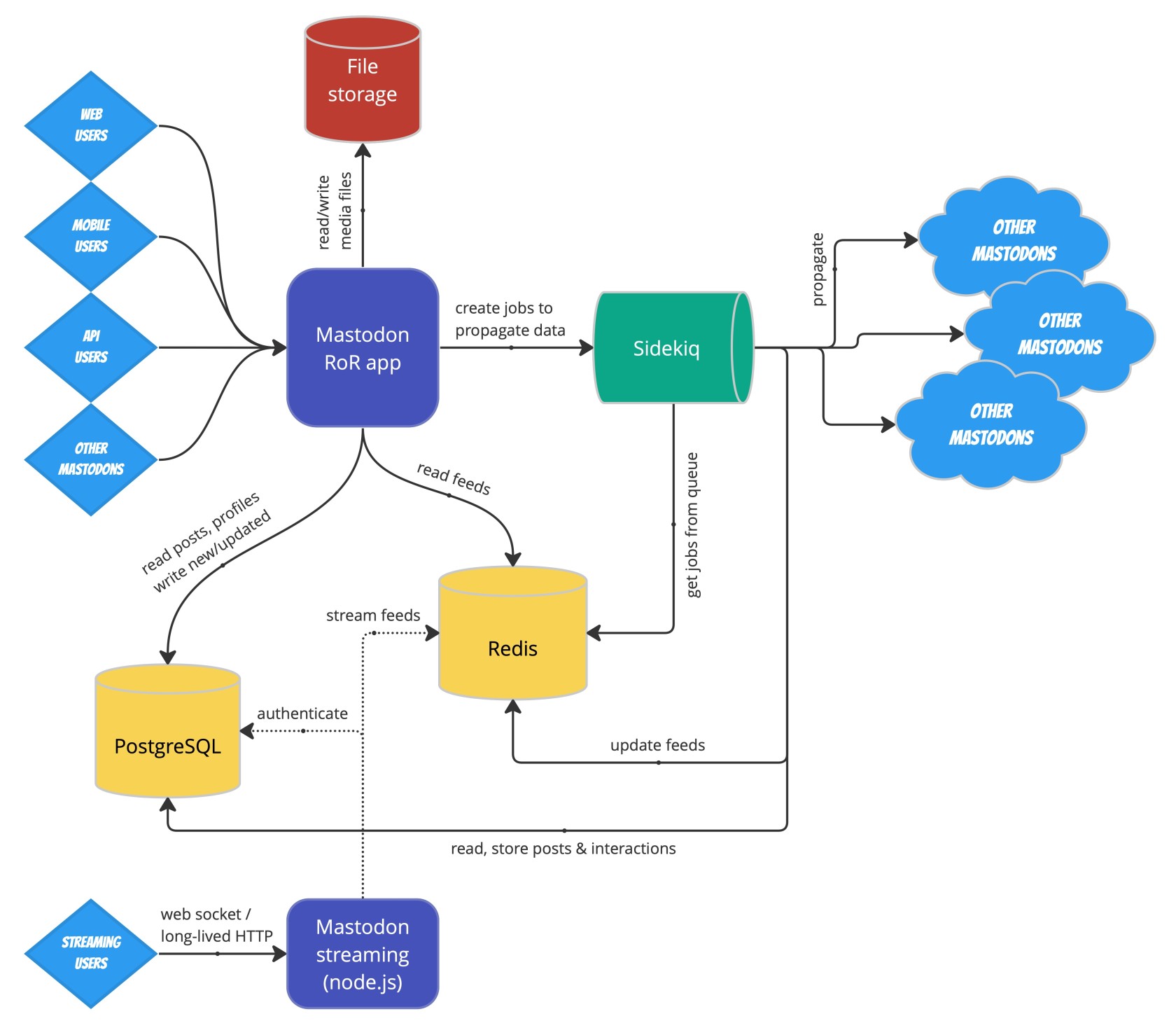

The root of this issue lies in the architecture of Mastodon. Every time a new action (creating a post, uploading an image, interacting with another instance, ...) happens on a Mastodon instance, it is not processed immediately, but put into a job queue. This queue consists of two pieces of software: the Sidekiq job processor and the Redis database that stores the queued jobs.
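To make the queue idea concrete, here is a minimal sketch of how the backlog per queue could be inspected. It assumes that Sidekiq keeps each queue as a Redis list under the key `queue:<name>` (optionally behind a namespace prefix) and that the client object offers an `llen` method, as redis-py's `Redis` class does. The `queue_backlog` helper and the `FakeRedis` stand-in are hypothetical names used only for illustration, so the sketch runs without a Redis server.

```python
from typing import Protocol


class SupportsLlen(Protocol):
    """Anything with a Redis-style LLEN call (e.g. a redis-py client)."""

    def llen(self, name: str) -> int: ...


def queue_backlog(client: SupportsLlen, queues: list[str], namespace: str = "") -> dict[str, int]:
    """Return the number of waiting jobs per Sidekiq queue.

    Assumption: each queue is a Redis list stored under 'queue:<name>',
    prefixed with '<namespace>:' when a Redis namespace is configured.
    """
    prefix = f"{namespace}:" if namespace else ""
    return {q: int(client.llen(f"{prefix}queue:{q}")) for q in queues}


class FakeRedis:
    """Hypothetical in-memory stand-in for a real Redis client."""

    def __init__(self, lists: dict[str, list[str]]) -> None:
        self._lists = lists

    def llen(self, name: str) -> int:
        return len(self._lists.get(name, []))


fake = FakeRedis({"queue:default": ["job"] * 3, "queue:ingress": ["job"] * 7})
backlog = queue_backlog(fake, ["default", "ingress", "push", "pull"])
print(backlog)  # {'default': 3, 'ingress': 7, 'push': 0, 'pull': 0}
```

Against a live instance, the fake client would be swapped for a real connection; a steadily growing number for one queue is the "clogged pipe" described above.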

Depending on how busy an instance is, a default Mastodon setup will most likely run into the issues mentioned above as its user base grows: the default job queue configuration can only cope with a moderate number of requests before it is overwhelmed and the job "pipes" get clogged up.

User reporting growing latencies (German)

Architecture overview

Flow of actions ("jobs") through a Mastodon instance. On the right-hand side of the overview we can see the Sidekiq and Redis components.

Mastodon architecture, courtesy of Softwaremill / Adam Warski (c) 2022

Mastodon Kubernetes installation

The recommended way of deploying Mastodon on Kubernetes is via the official Mastodon Helm chart. Depending on the chosen configuration of the chart, this will produce several Kubernetes objects for all the services needed to run a Mastodon instance.

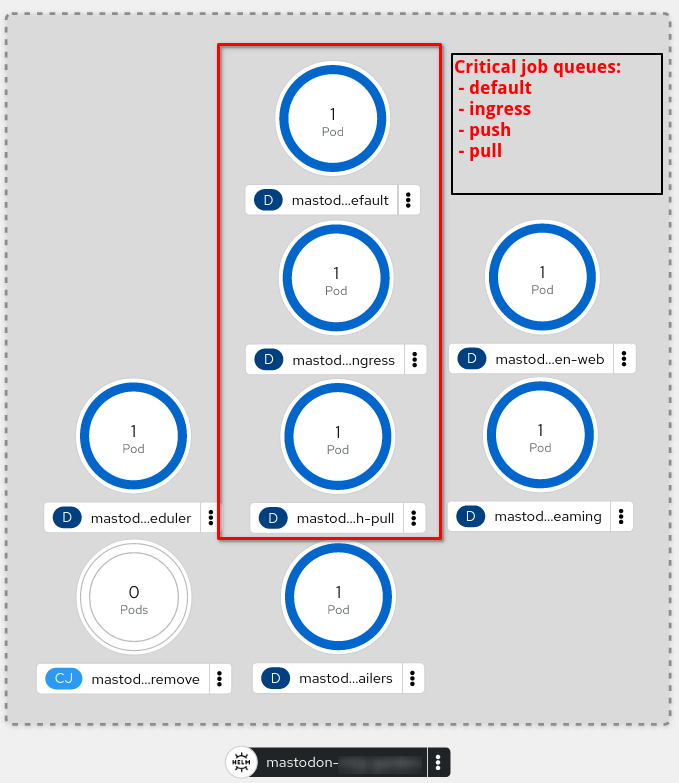

The objects we are interested in for this article are the Sidekiq containers ("pods"), which process the different job queues. Again, depending on the Helm configuration, these queues can be spread out over multiple pods, e.g.:

See Mastodon's documentation on queues to understand what these do in detail. For now let's focus on the default, ingress and push/pull queues, as those are the most important ones.

Although a single pod can be configured to handle different queues in parallel, it is highly recommended to separate these pods by queue type to enable the desired scale-out capabilities.

OpenShift "Developer" view of a Mastodon installation, deployed via Helm. Image courtesy of b2c@dest-unreachable.net (c) 2023

Challenge

Now, depending on the usage pattern of the instance, the time of day and possibly many other factors, different queues will see varying amounts of load. Certain events (posts going viral, major news events, etc.) may even cause temporary peak loads that will subside quickly.

Although monitoring solutions can (and should!) be put in place to detect such scenarios, admin interaction will still be required to scale up the deployments of the pods handling the affected queues. This usually leads to over-provisioning - scaling up the different deployments permanently to prep for such situations just in case. This of course can be costly on resources, and is technically not sound. We should be able to do better.

But what if we had something to check the current load of the queue in Redis and scale pods accordingly? Of course we could have the kubernetes built-in

See also:

Solution

Autoscaling Sidekiq pods will create additional connections to the PostgreSQL database. Ensure your connection limit is high enough and/or deploy a connection pooler (e.g. PgBouncer).
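A rough way to check the connection budget before enabling autoscaling is sketched below. It assumes that every Sidekiq pod may open up to one database connection per worker thread; the queue names, replica limits, thread count and the figure for other clients are illustrative values, not recommendations, and both helper functions are hypothetical.

```python
def sidekiq_db_connections(max_replicas: dict[str, int], threads_per_pod: int) -> int:
    """Upper bound on DB connections if every queue scales to its maximum.

    Assumption: one connection per Sidekiq worker thread in each pod.
    """
    return sum(max_replicas.values()) * threads_per_pod


def fits_connection_limit(
    max_replicas: dict[str, int],
    threads_per_pod: int,
    other_connections: int,
    max_connections: int,
) -> bool:
    """True if the worst case still fits below the PostgreSQL connection limit."""
    needed = sidekiq_db_connections(max_replicas, threads_per_pod) + other_connections
    return needed <= max_connections


# Illustrative upper replica limits per queue deployment.
max_replicas = {"default": 5, "ingress": 5, "push": 3, "pull": 3}

peak = sidekiq_db_connections(max_replicas, threads_per_pod=25)
print(peak)  # 16 pods * 25 threads = 400

# 100 is PostgreSQL's default max_connections; 50 stands in for web and streaming pods.
print(fits_connection_limit(max_replicas, 25, other_connections=50, max_connections=100))  # False
```

If the check fails, either the connection limit has to be raised, the maximum replica counts lowered, or a pooler placed in front of the database.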

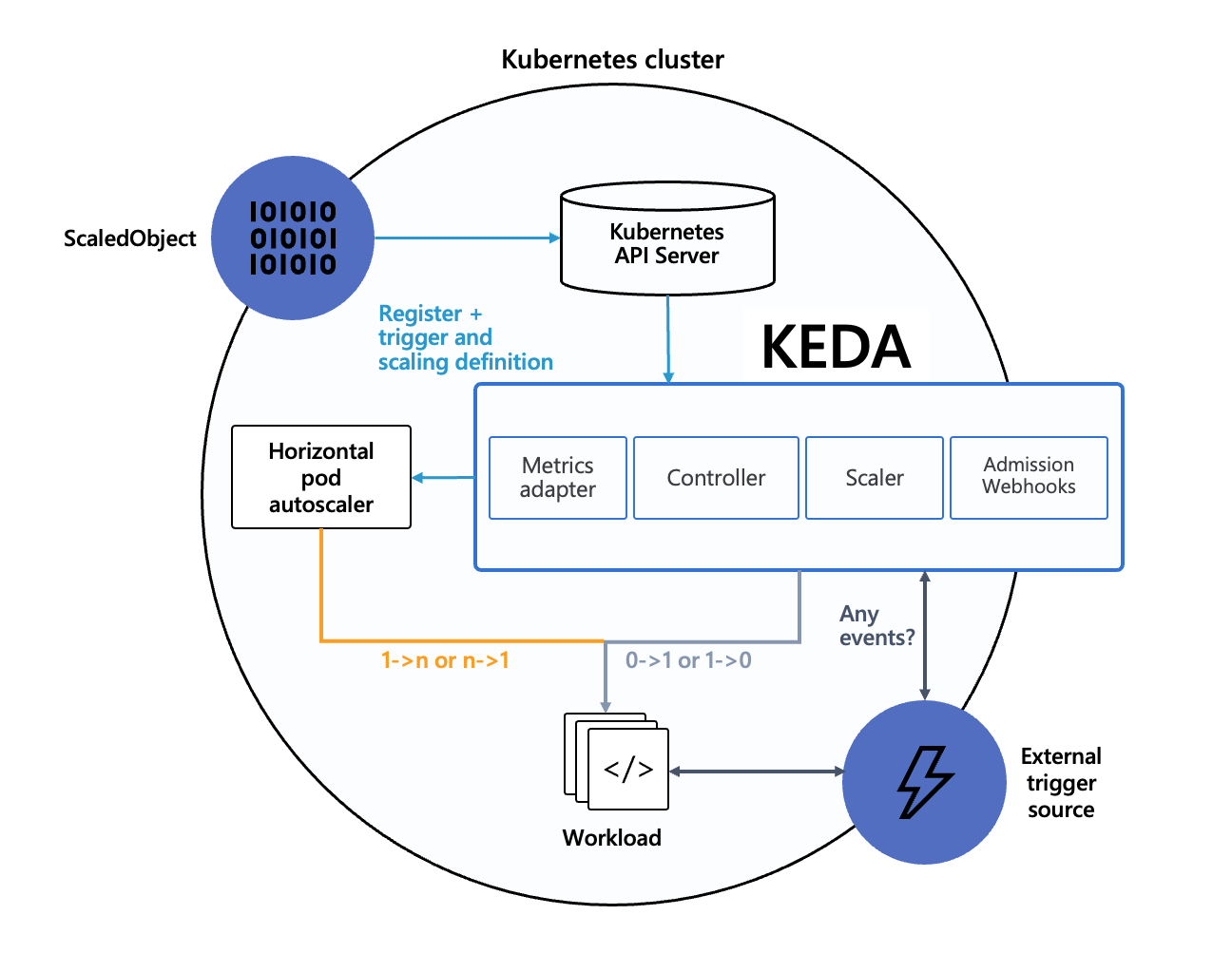

In steps the KEDA operator, an event-driven autoscaler which can collect metrics from resources outside the cluster and scale pods based on this information.

Specifically, we are interested in the number of jobs in the Redis queues. Luckily, KEDA has a scaler just for this: the Redis lists scaler, which we will utilize to scale our deployments without any admin interaction.
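The scaling decision itself can be approximated with a little arithmetic. The sketch below assumes the autoscaler aims for a target number of queued jobs per replica and clamps the result between a minimum and a maximum replica count; the function name and parameters are hypothetical, and the real behaviour additionally involves polling intervals, tolerances, cooldown periods and optional scale-to-zero.

```python
import math


def desired_replicas(
    list_length: int,
    target_per_replica: int,
    min_replicas: int,
    max_replicas: int,
) -> int:
    """Approximate replica count for a given Redis list length.

    Simplified model: ceil(queued jobs / target jobs per replica),
    clamped to the configured minimum and maximum.
    """
    if target_per_replica <= 0:
        raise ValueError("target_per_replica must be positive")
    wanted = math.ceil(list_length / target_per_replica)
    return max(min_replicas, min(max_replicas, wanted))


print(desired_replicas(0, 10, min_replicas=1, max_replicas=5))    # 1 (idle queue, stay at minimum)
print(desired_replicas(35, 10, min_replicas=1, max_replicas=5))   # 4 (35 jobs need 4 pods at 10 each)
print(desired_replicas(500, 10, min_replicas=1, max_replicas=5))  # 5 (peak load, capped at maximum)
```

The cap matters: it bounds both the resource usage during a traffic spike and the number of database connections the Sidekiq pods can open.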

Sweet! But how does one do it?


Implementation

To get this working, we need to get two things done:


Installing the operator

Installing the operator is straightforward and

This has been confirmed working on: